Data Reliability Engineering (DRE) introduces a modern approach to achieving high-quality data without unnecessary toil, ensuring a balance between velocity and reliability in data platforms.

Data plays a critical role as it fuels decision-making across various organizational teams, including Product, Marketing, Finance, Security, Innovation, and Development. Reliable data is the backbone of informed, data-driven business decisions and successful outcomes.

Bad data is a costly issue. According to Gartner, organizations lose an average of $12.9M annually due to bad data’s impact on productivity, downtime, decision-making, revenue, and reputation.

Some of the key risks associated with poor data quality include:

Understanding why data quality is critical requires examining the evolution of the data ecosystem and engineering practices. Below is an overview of the key contributing factors:

Infrastructure

Looking back roughly two decades, software engineering tooling was still maturing as version control systems like Git and cloud platforms like AWS, GCP, and Azure emerged. These platforms evolved to scale with organizational SDLC needs. Over the last decade, technologies like Snowflake, Amazon Redshift, Google BigQuery, and Databricks have become foundational for cloud-based data engineering infrastructure.

Frameworks

In software engineering, tools such as Gradle, Node.js, Selenium, and Jenkins accelerated coding and microservices development while leveraging cloud infrastructure efficiently. Similarly, in the data domain, frameworks and tools like Apache Airflow, Fivetran, dbt, TensorFlow, Dagster, Tableau, and Looker have enabled data teams to expedite tasks like ETL processes, pipelines, data transformations, and reporting. These frameworks allow organizations to build data products more effectively, taking full advantage of managed cloud services, which are typically faster to adopt and operate than on-premises solutions.

Observability

Once services or products are built and deployed on managed platforms, ensuring their performance and reliability becomes paramount. Observability tools like New Relic, Datadog, AppDynamics, Dynatrace, Grafana, and ELK have become essential in software engineering. These tools provide real-time insights into events, anomalies, availability, and serviceability metrics such as throughput, latency, error rates, and saturation.

In the data domain, tools like Bigeye, Monte Carlo, and Great Expectations address similar challenges. They enable organizations to monitor data products and ensure they perform as expected by leveraging metrics, logs, traces, and impact analysis.

Data Reliability Engineering (DRE) focuses on treating data quality as an engineering problem to build highly reliable and scalable data products. It establishes essential practices within the data ecosystem to address modern challenges.

The growing importance of DRE can be attributed to the following factors:

DRE addresses these challenges by introducing a combination of data suites, protocols, and a quality-focused mindset to resolve operational issues in analytics and ML/AI. These practices are particularly effective in managing the operational layers of data products.

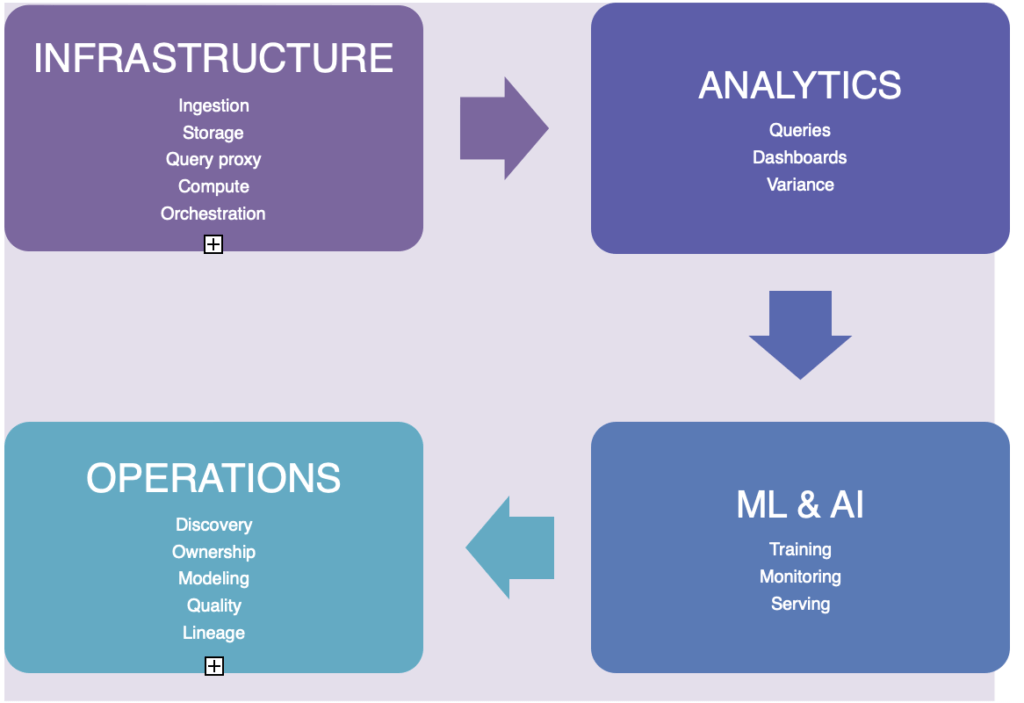

Refer to Fig. 1.1 for additional insights.

Fig. 1.1 Data Space

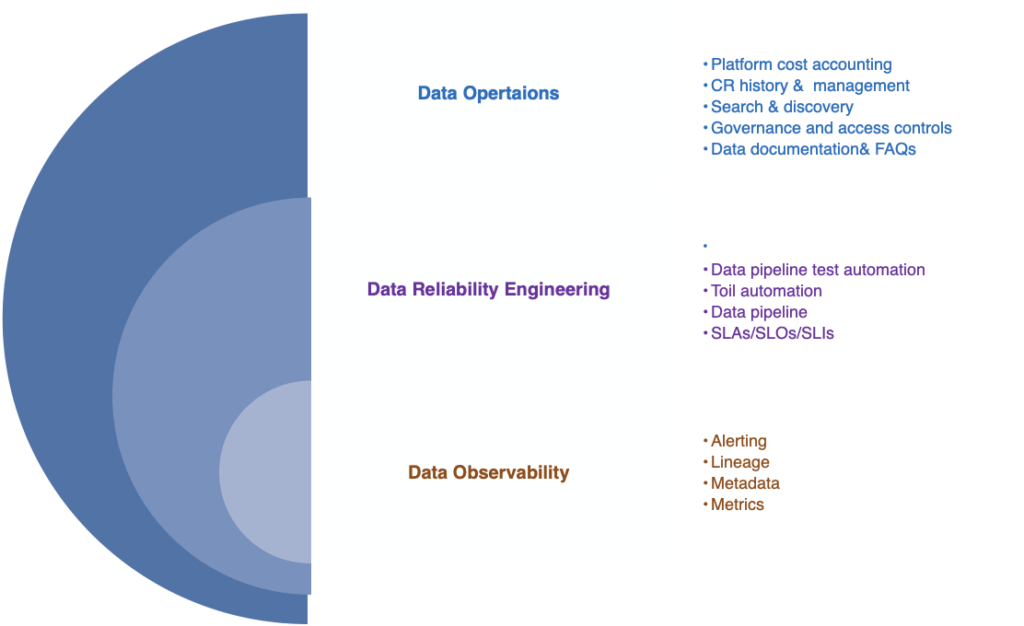

Data Reliability Engineering (DRE) is a specialized subset of Data Operations, focused on managing data and providing essential insights about it. As shown in Fig. 1.2, DRE encompasses several components, including Data Observability, which adheres to Service Level Experience (SLX) principles.

Key elements include:

Fig. 1.2 Data Layers

The DRE team follows a set of key practices that have evolved over time. These practices let the team respond to issues quickly and keep data quality high enough for use in key applications, without slowing the iteration velocity of the data environment.

References

For example, the DRE SLOs and improvements below are drawn from the source. For more detail, see SLOs & Data Pipeline Improvements.

Data Freshness

Most pipeline data freshness SLOs are in one of the following formats:
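As an illustration, one commonly used shape for a freshness SLO is "X% of records are processed within Y minutes". The sketch below checks that shape. The function names `freshness_sli` and `meets_freshness_slo`, and any thresholds or targets passed to them, are hypothetical and are not taken from the source.

```python
from datetime import datetime, timedelta


def freshness_sli(records, threshold):
    """Fraction of records whose processing delay is within the threshold.

    records: iterable of (created_at, processed_at) datetime pairs.
    threshold: maximum acceptable delay as a timedelta (assumed value).
    """
    records = list(records)
    if not records:
        return 1.0  # no data means nothing is stale
    fresh = sum(
        1 for created, processed in records
        if processed - created <= threshold
    )
    return fresh / len(records)


def meets_freshness_slo(records, threshold, target):
    """True when the measured freshness meets the target fraction (e.g. 0.99)."""
    return freshness_sli(records, threshold) >= target
```

A pipeline could evaluate this over a rolling window and alert when `meets_freshness_slo` returns `False`. The window length and target would depend on what downstream consumers actually need.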

Data Correctness

A correctness target can be difficult to measure, especially if there is no predefined correct output. If you don’t have access to such data, you can generate it. For example, use test accounts to calculate the expected output. Once you have this “golden data,” you can compare expected and actual output. From there, you can create monitoring for errors/discrepancies and implement threshold-based alerting as test data flows through a real production system.
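The golden-data comparison described above can be sketched as follows. This is a minimal example that assumes the pipeline output for test accounts can be reduced to a mapping from key to numeric value. The names `compare_to_golden` and `should_alert`, and the default 1% error-rate threshold, are hypothetical.

```python
def compare_to_golden(expected, actual, tolerance=0.0):
    """Return {key: (expected_value, actual_value)} for every mismatch."""
    discrepancies = {}
    for key, want in expected.items():
        got = actual.get(key)
        # A missing key or a value outside the tolerance counts as an error.
        if got is None or abs(got - want) > tolerance:
            discrepancies[key] = (want, got)
    # Keys in the output that the golden data does not know about are suspicious too.
    for key in actual.keys() - expected.keys():
        discrepancies[key] = (None, actual[key])
    return discrepancies


def should_alert(expected, actual, max_error_rate=0.01, tolerance=0.0):
    """Threshold-based alert: fire when the discrepancy rate exceeds the limit."""
    if not expected:
        return False
    bad = compare_to_golden(expected, actual, tolerance)
    return len(bad) / len(expected) > max_error_rate
```

Running the comparison on every pass of the test accounts through production turns correctness into a measurable rate rather than a one-off check.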

Data Isolation / Load Balancing

Sometimes you will have segments of data that are higher in priority or that require more resources to process. If you promise a tighter SLO on high-priority data, it’s important to know that this data will be processed before lower-priority data if your resources become constrained. The implementation of this support varies from pipeline to pipeline but often manifests as different queues in task-based systems or different jobs. Pipeline workers can be configured to take the highest available priority task. There could be multiple pipeline applications or pipeline worker jobs running with different configurations of resources, such as memory, CPU, or network tiers, and work that fails on lower-provisioned workers could be retried on higher-provisioned ones. In times of resource or system constraints, when it’s impossible to process all data quickly, this separation allows you to preferentially process higher-priority items over lower ones.
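A worker that always takes the highest available priority task can be sketched with a heap-backed queue. This is an in-memory illustration only; a real pipeline would likely use separate queues or jobs in its task system. The class name `PriorityTaskQueue` and the convention that a lower number means higher priority are assumptions made for this example.

```python
import heapq
import itertools


class PriorityTaskQueue:
    """Hands out tasks in priority order, FIFO within the same priority."""

    def __init__(self):
        self._heap = []
        # Monotonic counter breaks ties so equal-priority tasks keep arrival order.
        self._counter = itertools.count()

    def submit(self, task, priority):
        # Assumed convention: lower number = higher priority (0 is most urgent).
        heapq.heappush(self._heap, (priority, next(self._counter), task))

    def take(self):
        """Return the highest-priority task, or None if the queue is empty."""
        if not self._heap:
            return None
        _, _, task = heapq.heappop(self._heap)
        return task
```

When resources are constrained, workers calling `take()` drain the high-priority items first, and lower-priority items wait until capacity frees up.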

End-to-End Measurement

The end-to-end SLO includes log input collection and any number of pipeline steps that happen before data reaches the serving state. You could monitor each stage individually and offer an SLO on each one, but customers only care about the SLO for the sum of all stages. If you’re measuring SLOs for each stage, you would be forced to tighten your per-component alerting, which could result in more alerts that don’t model the user experience.
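To make the difference between per-stage and end-to-end measurement concrete, the sketch below sums stage latencies and compares the total against a single SLO, while separately listing stages that exceed their own budget. The stage names, budgets, and function names are hypothetical and chosen only for illustration.

```python
def end_to_end_latency(stage_latencies):
    """Total seconds from input collection until data reaches the serving state."""
    return sum(stage_latencies.values())


def meets_end_to_end_slo(stage_latencies, slo_seconds):
    """The customer-facing check: only the sum of all stages matters."""
    return end_to_end_latency(stage_latencies) <= slo_seconds


def stages_over_budget(stage_latencies, stage_budgets):
    """Stages exceeding their own budget; useful for debugging, noisy for paging."""
    return sorted(
        name for name, latency in stage_latencies.items()
        if latency > stage_budgets.get(name, float("inf"))
    )
```

For instance, with latencies of 120, 300, and 60 seconds for three stages, a 200-second budget per stage, and a 600-second end-to-end SLO, the middle stage is over its budget but the end-to-end SLO is still met. Alerting on the stage alone would page without any user-visible impact.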
