Reimagining Talent as Infrastructure: Building the AI-First Enterprise

AI-powered talent ecosystems are redefining enterprise success, driving faster hiring, agile workforce mobility, ethical AI governance, and measurable growth.

AI is transforming industries such as healthcare, manufacturing, and IT, driving innovation and improving efficiency at an unprecedented pace.

While AI has been shown to reduce human error and to analyze trends for faster decision-making, it still struggles to earn users’ trust in its output. Moreover, wider adoption of AI in business requires ensuring AI safety at every stage.

In this blog, we’ll dive deep into AI safety, explore why it is crucial, and discuss how to identify and address threats using the right tools.

AI safety means protecting AI systems throughout development and deployment and safeguarding them against attacks, misuse, and accidental harm.

By proactively addressing technological, ethical, and societal concerns, it aims to create reliable and robust AI systems.

Let’s categorize all risks into these three areas:

Addressing potential security threats in AI development involves identifying risks to individuals, organizations, and ecosystems. This requires innovative solutions to ensure the responsible deployment of advanced AI systems.

Next, let’s explore the possible security threats in AI and discuss how to address them with the right tools.

From data collection to deployment, various safety and ethical issues may arise. To address these concerns, many innovative solutions are emerging to mitigate risks and ensure responsible AI deployment.

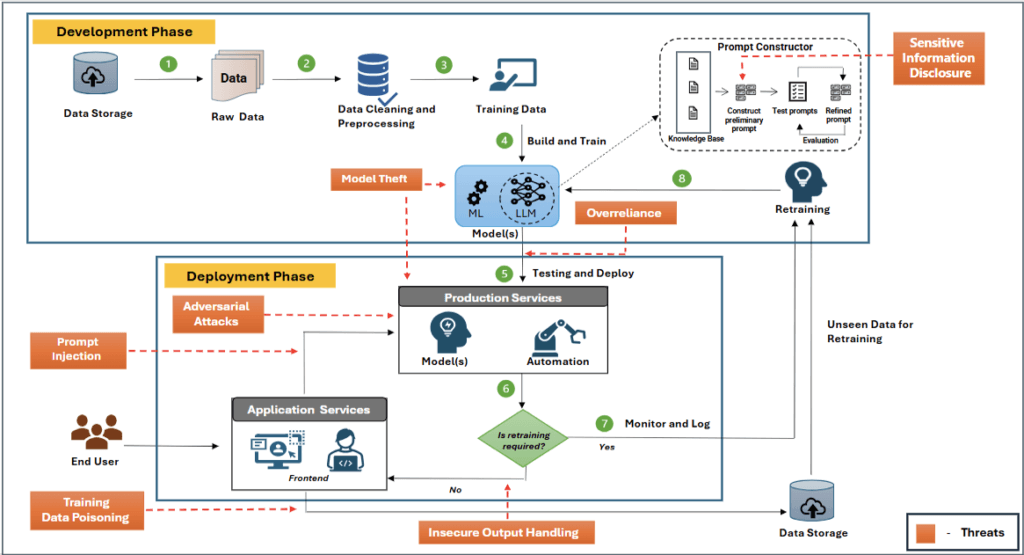

Here’s an example architecture that illustrates the security threats an AI system can encounter during its development and deployment phases.

The table below details the threats identified in the architecture, along with possible measures and tools to mitigate them.

| Threats | Risk Mitigators (Tools and Techniques) |
| --- | --- |
| Sensitive Information Disclosure: AI models may unintentionally expose confidential data, leading to privacy breaches. | Protecting Sensitive Data: Encrypting sensitive input data and adhering to regional data regulations (e.g., GDPR) to safeguard privacy and prevent unauthorized access. Tools: Masked AI / Presidio |
| Insecure Output Handling: Accepting AI model outputs without scrutiny can expose backend systems to vulnerabilities. | Content Filtering: Removing harmful or inappropriate content to uphold safety and integrity. |
| Overreliance: Relying too much on AI models without understanding their limits or having human oversight can cause errors or unforeseen outcomes. | Human-in-the-Loop Monitoring: Establishing systems for human oversight to prevent undesirable outcomes from overreliance on AI. |
| Training Data Poisoning: Manipulating training data can compromise model integrity, introducing biases or vulnerabilities that undermine system effectiveness and reliability. | Data Filtering: Analyzing data to remove harmful information, ensuring accurate and reliable results. Tools: Evidently AI, TFDV (TensorFlow Data Validation) |
| Prompt Injection: Manipulating AI/ML models, especially large language models (LLMs), through crafted inputs to induce unintended actions (e.g., jailbreak prompts such as DAN, “Do Anything Now”). | Prompt Filtering: Screening prompts to mitigate data leakage and safeguard sensitive data while using LLMs. Tools: Prompt injection filtering using LLMs |
| Model Theft: Unauthorized access to AI models risks intellectual property theft, loss of competitiveness, and misuse of stolen models. | Access Control: Regulating data and model access through user permissions and authentication, such as two-factor authentication, to prevent unauthorized access and theft. |
| Adversarial Attack: Subtly manipulating inputs to deceive an AI system into making errors or misclassifying data. | Adversarial Testing: Rigorous testing to find and fix AI system vulnerabilities, simulating real-world attacks for improved robustness and security. Tools: ART (Adversarial Robustness Toolbox) |
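
To make a few of these mitigations concrete, here is a minimal sketch of how sensitive-data masking, prompt filtering, and output handling might be chained around a model call. It uses only the Python standard library as a stand-in for dedicated tools such as Presidio or an LLM-based filter. The function names, regular expressions, and phrase list are all assumptions made for illustration and are far too narrow for production use.

```python
import html
import re

# Hypothetical patterns for illustration; real deployments need much broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

# Hypothetical deny-list of phrases often seen in jailbreak prompts.
INJECTION_PHRASES = (
    "ignore previous instructions",
    "ignore all previous instructions",
    "do anything now",
)


def mask_sensitive(text: str) -> str:
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def looks_like_injection(prompt: str) -> bool:
    """Return True if the prompt contains a phrase from the deny-list."""
    lowered = prompt.lower()
    return any(phrase in lowered for phrase in INJECTION_PHRASES)


def sanitize_output(model_output: str) -> str:
    """Escape HTML so model output cannot inject markup into a web page."""
    return html.escape(model_output)


def guarded_call(prompt: str, model) -> str:
    """Filter the prompt, mask sensitive data, call the model, escape the result."""
    if looks_like_injection(prompt):
        return "Request blocked by prompt filter."
    return sanitize_output(model(mask_sensitive(prompt)))
```

Here `model` is any callable that takes a prompt string and returns a string. A simple phrase list like this is easy to bypass, which is one reason the table points to LLM-based prompt filtering rather than keyword matching alone.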

These tools for managing AI system threats align well with the Manage function of the AI RMF (AI Risk Management Framework) developed by NIST.

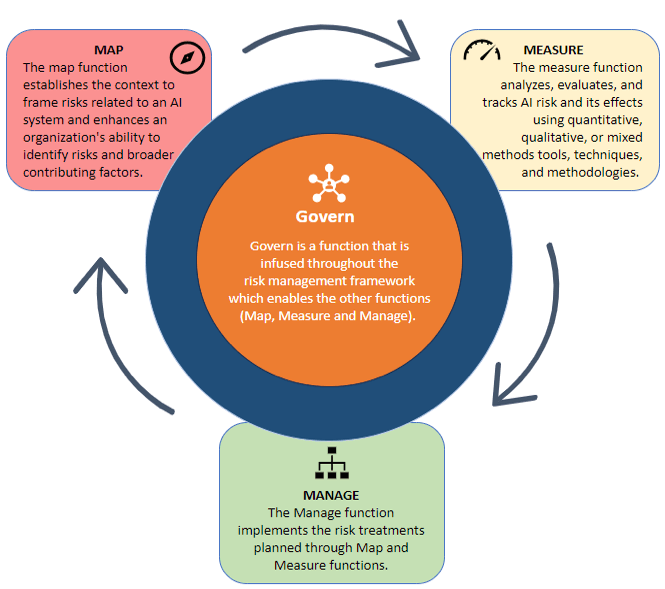

The AI RMF is a structured process for identifying, assessing, and mitigating risks throughout the AI lifecycle, which makes it central to effective risk management overall.

It comprises four functions: Govern, Map, Measure, and Manage. Let’s look at each function and its role in a nutshell.

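Govern: Cultivates a culture of risk management across the organization, with policies, roles, and accountability for AI risk.

Map: Establishes the context in which the AI system operates and identifies the risks related to that context.

Measure: Assesses, analyzes, and tracks the identified risks.

Manage: Prioritizes risks and acts on them based on their projected impact.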

AI RMF offers a comprehensive strategy to tackle AI risks across a range of use cases and sectors and emphasizes responsible and trustworthy AI development and deployment.
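
As a rough illustration of the Manage function, the sketch below keeps a small risk register and orders it so the highest-scoring risks are handled first. The `Risk` class, the 1-to-5 scales, and the likelihood-times-impact score are assumptions chosen for the example; the AI RMF does not prescribe a particular scoring formula, and the numbers here are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    threat: str
    likelihood: int  # 1 (rare) to 5 (frequent); hypothetical scale
    impact: int      # 1 (minor) to 5 (severe); hypothetical scale
    mitigation: str

    @property
    def score(self) -> int:
        # Simple likelihood x impact score, a common convention and not an RMF rule.
        return self.likelihood * self.impact


def prioritize(risks):
    """Return the risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Illustrative entries only; the scores are invented for this example.
register = [
    Risk("Model theft", 2, 5, "Access control"),
    Risk("Prompt injection", 4, 4, "Prompt filtering"),
    Risk("Training data poisoning", 2, 4, "Data filtering"),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.threat}: {risk.mitigation}")
```

In practice the register would be fed by the Map and Measure functions, with the scores revisited as the system and its context change.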

Conclusion

Safety is paramount in the rapidly evolving field of AI, and robust measures are crucial for responsible use. By balancing innovation with ethics and safety, we can ensure a secure future for AI. Remember, AI safety is not a barrier to advancement but a necessary complement.
