Reimagining Talent as Infrastructure: Building the AI-First Enterprise

AI-powered talent ecosystems are redefining enterprise success driving faster hiring, agile workforce mobility, ethical AI governance, and measurable growth.

In today’s rapidly evolving data-driven world, ensuring data quality has become a paramount concern for organizations across various industries. Data quality refers to the degree to which data meets requirements and expectations. It encompasses accuracy, completeness, consistency, timeliness, and reliability, ensuring suitability for analysis and decision-making.

This is where building a SSOT (single source of truth) is incredibly important for any organization to ensure reliability, auditability and transparency. As part of our Digital Business Methodology, we extensively leverage platforms such as Snowflake to help our clients build SSOTs as aligned to their business needs and goals.

While building out a SSOT solution leveraging Snowflake, we realized that there are not many tools available for performing data quality checks and available ones are either not compatible, expensive or did not meet a complex set of requirements. This led us to develop a custom data quality framework within Snowflake using stored procedures.

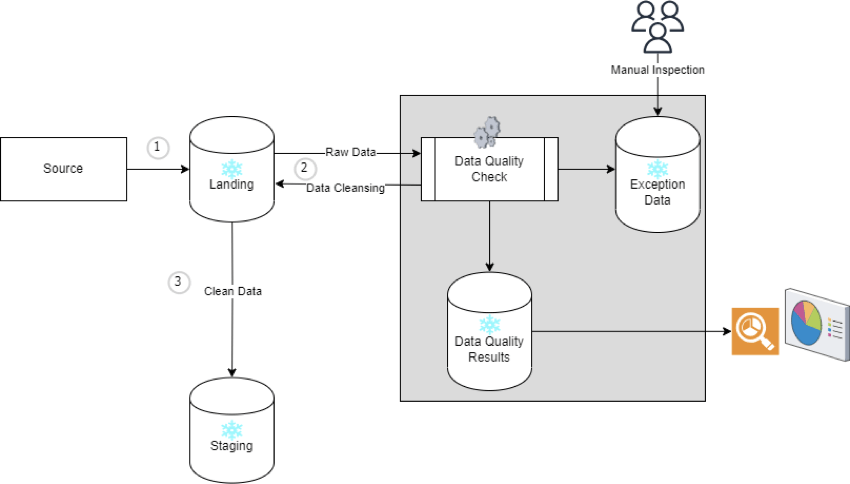

We developed this framework as an independent piece that is easily pluggable to data pipeline without disturbing any of the existing data mappings. The high-level flow is as follows:

Below is the high-level design:

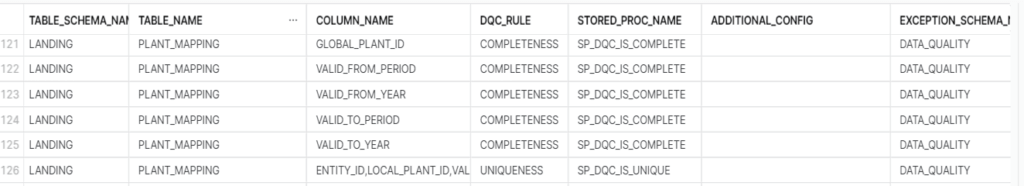

This is centralized location for all the configurations or rules that needs to be enforced. It is possible that there are different data check needs in the same table on different columns. The metadata configuration maintains all the data quality checks in one place which is referred for running the Data Quality (DQ) validation throughout. Structure of this table can vary as per the requirement. We have maintained details like table name with schema, column name for which rules must be executed for, stored procedure name which has the code for executing the rule and a few flag indicators like stop load if rule fails and if capturing and cleansing of exception data is needed or not.

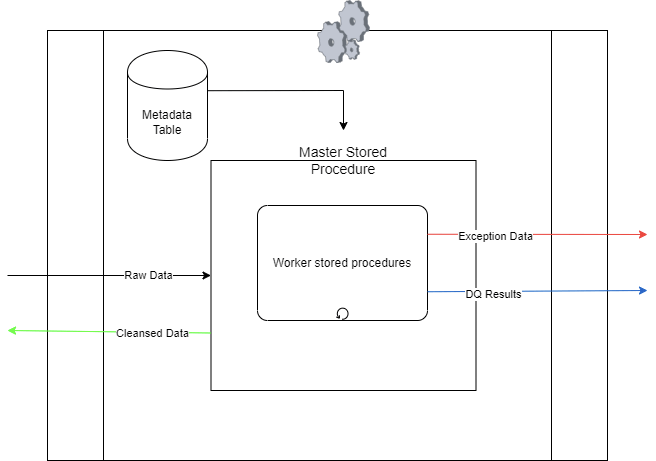

The master stored procedure acts as the central control in the Snowflake data quality framework. It triggers data quality checks for specific tables using input parameters for schema and table names. These parameters link to metadata containing applicable rules.

Using metadata, the master procedure coordinates data quality check procedures which accesses data from the designated table, performs quality checks, and logs the results accordingly.

Monitoring execution status, the master procedure halts the entire data quality check process if suspension-triggering rule is failed.

As per setup, the master procedure can also initiate data table clean-up, eliminating problematic records.

Each DQ check is executed by a worker that underlying uses a stored procedure. The controller/master orchestrates these workers as per the rules defined in the metadata configuration.

Below is an example to check completeness. Similar workers can be created for other DQ checks namely uniqueness, subset, double entry, checking table structure etc.

SP_DQC_IS_COMPLETE is used to check if the column has NULL values and populate the result accordingly to the results table. This stored procedure can be called individually as well. The stored procedure takes inputs from the metadata table that is passed as parameters.

The framework facilitates easy extensibility. Adding new rule or code is quite easy. A new stored procedure needs to be created with the rule execution logic and the same needs to be configured in the metadata table. This is further simplified by utilizing a common template across all the worker stored procedures. This template incorporates preset parameters, along with logic for logging data quality outcomes and capturing exception data in a dedicated table. By integrating new data quality check logic and making minor adjustments to existing procedures, the rule check procedure becomes readily adaptable.

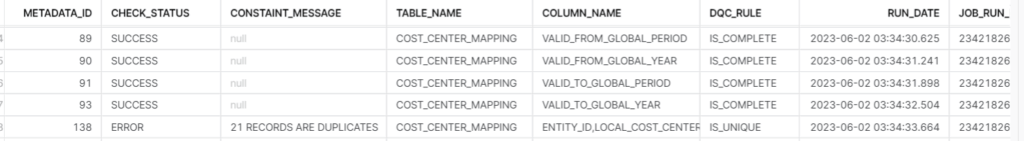

This table captures results of the rules executed with corresponding details. Details are logged irrespective of success or failure in data quality. This table structure can be modified as per the requirement. We have maintained details like rule id to link it back to metadata table, table name, status and message. Message is only populated when there is a failure, and it will contain the record count of failed records. Along with this we have maintained a few audit columns like Date of execution and batch id of data for which the rule is executed.

The DQ check framework developed for Snowflake data pipeline addressed our core DQ needs without relying on any external solution. The framework was extensible thus enabled adding new validation rules as the project progressed. By leveraging Snowflake’s scalability and parallel processing capabilities, the framework efficiently handled large volumes and varieties of data. It seamlessly integrated with the data pipeline, enabling data quality checks at various stages. Customizable quality rules and automation reduced manual effort, providing a systematic approach to validate data.

1 Snowflake schema to store raw data from source

2 Snowflake schema to store cleansed data post data quality checks and validations

AI-powered talent ecosystems are redefining enterprise success driving faster hiring, agile workforce mobility, ethical AI governance, and measurable growth.

Embedded finance isn’t merely a product evolution, it’s a structural shift in how financial services are consumed, delivered, and monetized. For banks, embedded finance must be treated as a strategic opportunity to lead ecosystem value creation and not a defensive response to fintech disruption.

Generative AI is transforming supply chains by reducing decision latency, enabling real-time scenario planning, and turning supply chain intelligence into a strategic business enabler. Discover how GenAI reshapes planning, resilience, and growth.

Altimetrik is committed to protecting your personal information. To apply for a position, you will need to provide your email address and create a login. Your information will be used in accordance with applicable data privacy laws, our Privacy Policy, and our Privacy Notice.